This site focuses on the use of the Kinect to control theatrical lighting. It will be in constant development as more information is gathered.

Below is an overview of the project.

Interactive Technology, Lighting, Perception, and the Actor

Origins

This project, like many other advances in lighting technology, began with a discovery but without the proper knowledge of implementation. In the theater, we often look at a piece of equipment and think of a way to repurpose it. That concept is the basis of props and the reason that water pipes are used to support lights in the automated rigging of most theaters. I saw the benefits of precise motion tracking in the theater and thought that if a lighting designer could harness it, then the “theater magic” of precise cueing could become real magic on stage. Yes, an actor could control their entire lighting environment. As a lighting designer, technician, director, and performer, I wanted first and foremost to learn how to utilize this capability and discover what its limitations were.

Doings

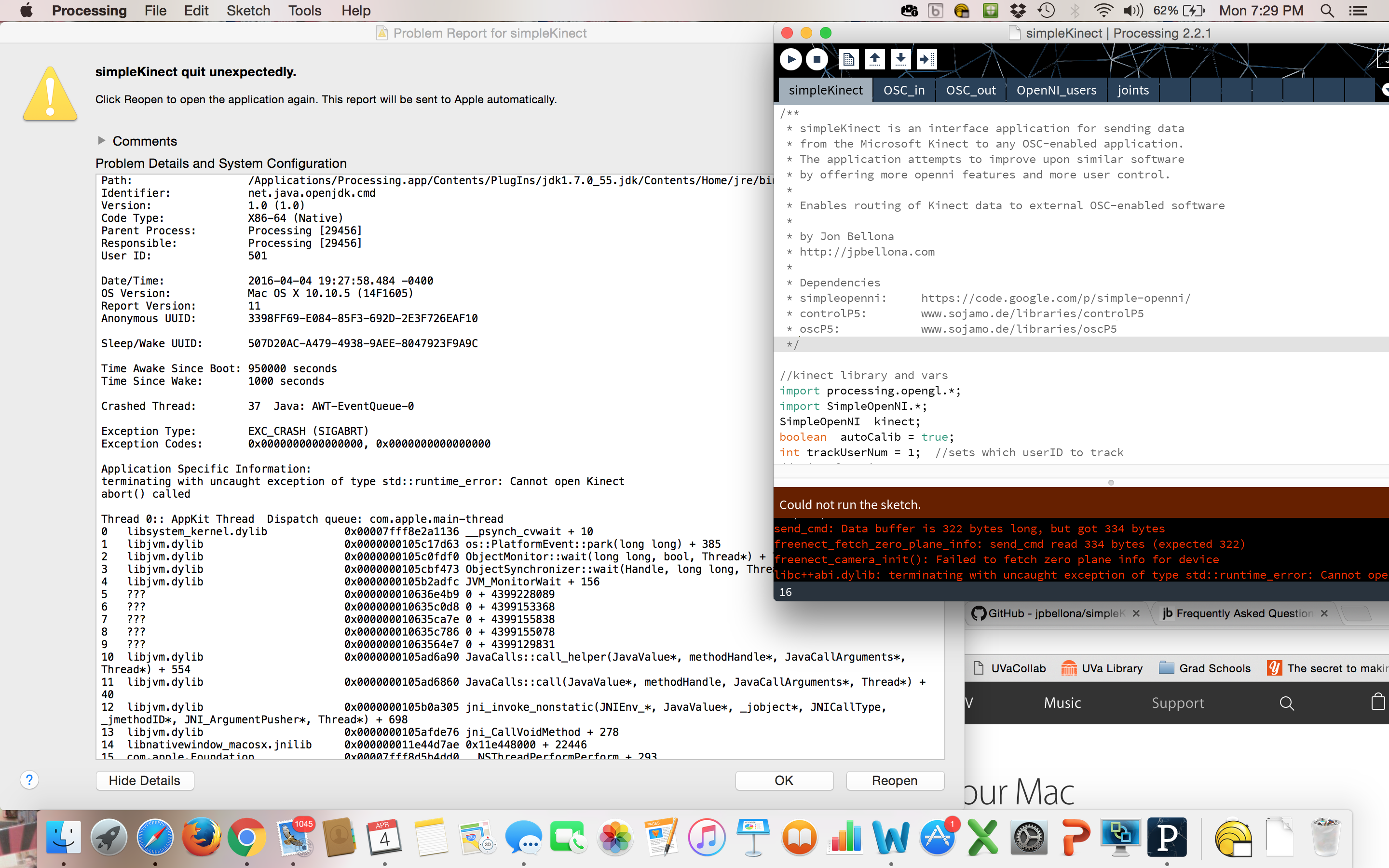

The process changed from what I had originally proposed. I soon learned that programming is difficult and that it would take time to learn to program from scratch. So I took a fantastic introductory course in the fall with Associate Professor Kevin Sullivan in the Computer Science Department and dreamed big, trying to learn what I could about the Xbox Kinect and the Myo Armband. I left the fall with a solid foundation on the computational level as well as a stronger understanding of how the ETC light boards work. During the spring semester I worked closely with the lighting design MFA candidates in the University of Virginia’s Department of Drama as well as with Jon Bellona from the Department of Music to figure out how to use Simple Kinect, MAX/MSP, and the ETC Eos in tandem through Open Sound Control. The feeling of this breakthrough was extremely rewarding.

Perception and the Actor

During this project, I had to dig into a mixture of cognitive and computer sciences. This was essential to my work as an artist. It is never enough for me to hand a program over to a director and say, “Here, just make sure they do this gesture.” I wanted this project to create a tool for actors, and for that to work, they need to be able to perceive the world as their character and as the camera/armband.

I learned that perception only works when you have a way of storing what you perceive and a method of communicating what you perceive to yourself and others. The key quickly became finding a route for communication. The Kinect’s camera does not actually take pictures in the way that a Nikon does. It uses infrared light that shoots out in a cone from the lens, hits an object, and bounces back to the lens. When the light hits a body, it casts a sort of shadow over all of the space that the infrared light cannot reach because of the body’s presence. The Kinect then receives the location of many dots in space. This allows the Kinect to create a visual separation between objects on a screen. The Myo Armband works by reading the fine muscle movements in the arm as one makes various gestures. The Kinect and Myo both produce waves of data as their “language.” The Myo utilizes EMG (electromyographic) waves, and the Kinect’s data, in this setup, arrives at the computer as OSC (Open Sound Control) messages. The names of these waves sound as pretentious as they are complicated, so for our purposes we will just think of them as the waves that a radio station sends for our music-listening pleasure. Like listening to music in a car, this process involves the device sending information along a radio channel. There are many radio stations, after all, and you need to be able to tune into a specific station to receive the right messages. The computer tunes into this station by choosing a “Port.”
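To make the radio-station picture a little more concrete, here is a minimal sketch in Python of what one of these messages looks like on the wire and what "tuning in" to a port means. It is not the software used in this project. The byte layout follows the published OSC 1.0 format (an address, a list of type tags, then the values), but the address `/righthand` and the port number mentioned afterwards are made up for illustration, and only decimal-number arguments are handled.

```python
import socket
import struct


def _pad(data: bytes) -> bytes:
    """Null-terminate a string and pad it to a multiple of 4 bytes (OSC rule)."""
    data += b"\x00"
    return data + b"\x00" * (-len(data) % 4)


def encode_osc(address: str, *floats: float) -> bytes:
    """Build an OSC message whose arguments are all 32-bit floats."""
    type_tags = "," + "f" * len(floats)
    payload = b"".join(struct.pack(">f", value) for value in floats)
    return _pad(address.encode("ascii")) + _pad(type_tags.encode("ascii")) + payload


def decode_osc(packet: bytes):
    """Read back the address and float arguments from a float-only OSC message."""

    def read_string(offset: int):
        end = packet.index(b"\x00", offset)
        text = packet[offset:end].decode("ascii")
        # Skip the terminator and padding: round up to the next multiple of 4.
        return text, (end + 4) & ~3

    address, offset = read_string(0)
    type_tags, offset = read_string(offset)
    count = len(type_tags) - 1  # drop the leading comma
    values = struct.unpack(">" + "f" * count, packet[offset:offset + 4 * count])
    return address, list(values)


def open_listener(port: int, host: str = "127.0.0.1") -> socket.socket:
    """'Tune in' to one station: a UDP socket bound to a single port."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    sock.bind((host, port))
    return sock
```

A listener opened with, say, `open_listener(12345)` only hears messages sent to port 12345, which is the sense in which the computer chooses its station. Each packet it receives can then be handed to `decode_osc`.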

Once at the computer, language conversion must happen. The ETC Eos is a very intelligent light board and recently upgraded its abilities so that it can receive Open Sound Control messages, but these messages are specific to the light board. They are not the same OSC messages that the Xbox Kinect creates, and they are a completely different type of information from what the Myo Armband produces. So I learned how to use a visual programming language through MAX/MSP, a program that is very well known among sound designers. In MAX, I can teach my computer how to speak the same language as the Kinect and the Myo and receive their data. Then I can manipulate it in a language that I personally understand and translate it into a language that the ETC Eos will understand. The light board is just another computer that tells the lights what to do, and then we finally get feedback into the real world as the lights change.
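The translation step was built as a MAX patch, which does not reproduce well as text, so the following is only a rough Python sketch of the same idea: take one tracked joint position and turn it into a message the light board could act on. Everything named here is an assumption for illustration. The incoming tuple layout, the 0 to 1 height range, and the channel number are hypothetical, and the outgoing address `/eos/chan/<number>` is modeled on ETC's documented OSC scheme but should be checked against the Eos manual for your software version.

```python
def hand_height_to_level(y: float, y_min: float = 0.0, y_max: float = 1.0) -> int:
    """Map a hand height onto a 0-100 intensity level, clamped at both ends."""
    fraction = (y - y_min) / (y_max - y_min)
    fraction = max(0.0, min(1.0, fraction))
    return round(fraction * 100)


def translate(joint_message, channel: int):
    """Convert a (address, x, y, z) joint message into an (address, level) pair.

    The incoming address is ignored here; a fuller patch would use it to pick
    which joint drives which channel.
    """
    _address, _x, y, _z = joint_message
    return (f"/eos/chan/{channel}", hand_height_to_level(y))
```

With these assumptions, a hand held at half height drives channel 12 to 50 percent: `translate(("/righthand", 0.1, 0.5, 2.0), 12)` gives `("/eos/chan/12", 50)`. Heights outside the expected range are clamped rather than passed on, so a reading the sensor gets wrong cannot send the board a nonsensical level.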

I have determined during this process that if an actor or director wants to use this device, they must understand everything about this system. It is not always exact and it is not always intuitive, so they must understand the entirety of the tool.

Lighting

Over the course of this project I began to realize that my biggest limitations were my own ability to program and the capabilities of the ETC Eos. The Eos is the fixed limitation of the two. There are many commands provided by ETC through OSC messages. In fact, there are commands for each individual key that someone can physically press, but the system is not fully intuitive, and these commands are new. Luckily, they can improve with each new update of ETC’s software.

As for my programming ability, that is the limitation I can change the most. I started this whole process knowing absolutely nothing about programming. By this point I have managed to communicate between the different products mentioned in this report and am constantly learning more about what I can do. Every day is an opportunity to learn more about coding, and that limitation crumbles with every new discovery. So what then is the limitation for a lighting designer? Perhaps the only limitation is their creativity and ability to create a theory on paper before they bring it to a program.

One very valuable tool for a lighting designer is the ability to track actors on stage. Through a very convoluted set of commands in MAX, I can create zones on stage and a system that detects when actors enter and exit these zones. Lights that are focused on those zones can be made to turn on when an actor reaches that area and back off when they leave. In many cases a lighting designer needs to light the playing space for the actors to be seen, but they do not want to have every light in the theater shining so that light reflects off of every surface of the set. This methodology is ideal for controlling light without writing a large number of cues. It also gives the actors fewer limitations: they can go anywhere on stage that their character would want without feeling confined by the requests of the designer. I believe this idea could ultimately lead to breakthroughs in the theater.
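The zone logic itself is simple once it is written down outside of MAX. The sketch below, in Python, is one possible way to express it and is not a transcription of the actual patch. The zone names, the coordinates, and the choice of a rectangular floor area measured in x (left to right) and z (distance from the sensor) are all assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Zone:
    """A rectangular area of the stage floor."""
    name: str
    x_min: float
    x_max: float
    z_min: float
    z_max: float

    def contains(self, x: float, z: float) -> bool:
        return self.x_min <= x <= self.x_max and self.z_min <= z <= self.z_max


class ZoneTracker:
    """Remembers which zones are occupied and reports only the changes."""

    def __init__(self, zones):
        self.zones = list(zones)
        self.occupied = set()

    def update(self, actor_positions):
        """Take the current (x, z) position of every tracked actor.

        Returns a list of (zone name, "on" or "off") events for zones whose
        occupancy changed since the previous update.
        """
        now = {
            zone.name
            for zone in self.zones
            if any(zone.contains(x, z) for x, z in actor_positions)
        }
        events = [(name, "on") for name in sorted(now - self.occupied)]
        events += [(name, "off") for name in sorted(self.occupied - now)]
        self.occupied = now
        return events
```

Because the tracker reports only changes, an actor standing still in a zone does not flood the light board with repeated commands, and a zone stays lit for as long as at least one actor remains inside it. Each "on" or "off" event would then be translated into whatever message brings up or takes out the lights focused on that zone.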

Obstacles

The most important thing that I learned from this process is that technology moves rapidly, almost too rapidly. Because devices are not developed to do this job for the theater, the designer or technician needs to “hack” their way towards their end goal. I never thought of myself as a hacker in any way until I was halfway through this project. At that point I realized that the Kinect is not meant for anything more than communicating with the Xbox. However, many people saw its potential and have done what they could to make it work on their computer. I had to fumble through many long nights to find the right software online to make specific pieces of hardware work for me.

The bulk of Kinect research happened a couple of years back. Many of the sites that held information on the topic have since expired, and my computer’s operating system has been updated five or six times. Certain models of the Kinect only work with certain programs. The type of light board that one is using may not have the physical capabilities to do what the hacker wants. All of these variables will keep on changing. I had the pleasure, though, of learning how to deal with these obstacles. The best way to overcome the obstacles is to reach out for help. Fellow hackers love to learn from one another, and the most rewarding experiences thus far were the ones that I spent with Jon Bellona in Music and Matthew Ishee in Lighting, where we mutually discovered kinks in our plans and how to fix them.

After encountering all of these technical difficulties, should a director and lighting designer agree to use technology like this? Absolutely. They should use it, but only if they have ample time. It became clear to me that a lighting designer could find use for this technology, but they would only have a few weeks to get it to work. I hope to make my story available online at michaelgiovinco.com so that others can see how I accomplished my goals. It is imperative for a designer to understand their tools before they can begin their craft. Only then can they work with the director and actors to implement this technology on stage.

Thank you to all of the supporters of the Miller Arts Scholars program, with a special thanks to Sandy and Vinie Miller and Evie and Stephen Colbert. Without your support, this research would not have been possible.